How to avoid AI disasters like “Shy Girl”

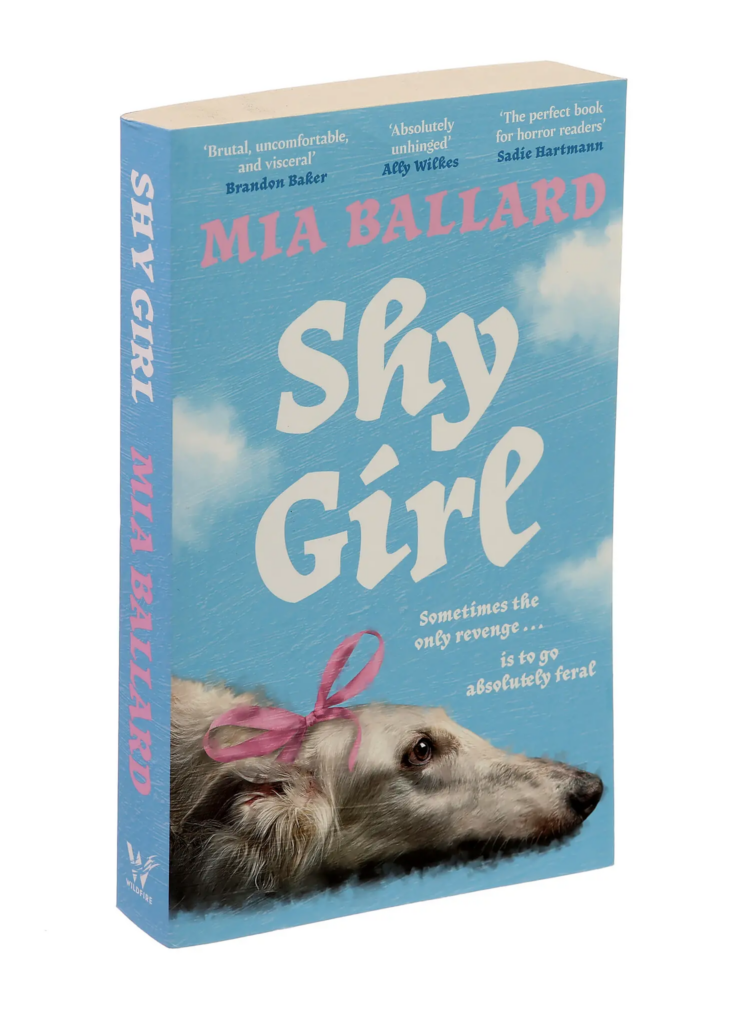

The publisher Hachette cancelled its planned US publication of Mia Ballard’s previously self-published horror novel Shy Girl because it said parts of it were apparently written with AI. Ballard denies it.

This has resulted in the usual round of finger-pointing among authors, editors, and publishers. For an intelligent perspective on the whole affair, read Brooke Warner’s take.

Hachette says it’s blocking publication because of its commitment to “original creative expression and storytelling.” Bull. It’s cancelling because AI-generated content can’t be protected by copyright, so anyone could knock off the book without penalty. And, of course, because it’s too embarrassing.

How many authors use AI to write things?

According to the large-scale survey we completed last year on AI and the Writing Profession, 58% of nonfiction authors and 42% of fiction authors use AI at least sometimes. However, only 1% of nonfiction authors and 4% of fiction authors use AI to produce text for publication at least weekly.

How bad is that? Bad enough. First of all, authors who use AI to write things likely lied about it in our survey, or didn’t take the survey. Even if the survey is entirely representative, that means a few percent of authors are passing off AI text as their own. And that’s a problem. Speaking as an editor, I’ve seen a few significantly AI-generated manuscripts. If I’m seeing them, I’m sure editors in the publishing world are seeing them, too.

The simple solution

There is no perfect solution. But there is a way to make this kind of problem far less frequent.

Publishers and authors are hurting themselves here. Publishers who insist, like Wiley, that every use of AI in preparing a book must be disclosed, are completely unrealistic — AI is now woven into every Google search and Grammarly grammar check and is a perfectly legitimate tool for finding errors, for example. Such publishers are basically asking authors to lie, and authors will lie or fool themselves rather than lose a publishing contract. It’s a stupid system begging for abuse.

As I’ve said before, authors should fill out and share a checklist about their uses of AI. Here’s my latest checklist:

- Grammar checking (e.g. Grammarly)

- Web search (e.g. Perplexity)

- Transcription of audio interviews

- Summary of materials for research purposes

- Review text for consistency

- Generate first drafts of text content

- Generate outlines or summaries

- Suggest words or appropriate terminology

- Deep research reports

- Assess reading level

- Generate first-draft graphics

- Generate final graphics

- Clean up formatting or citations

- Upload project content to projects or repositories

- Generate titles and headings

- Use AI as a brainstorming partner

- Generate text intended for publication

Every publisher should require their authors to fill out this checklist, including indicating any passages that are taken verbatim or substantially from AI output.

Except for the final item, none of this AI use is disqualifying. By enabling authors to fairly disclose their normal AI use for tasks like generating titles and web search, the checklist will reduce the number of authors who feel they must deceive their publisher.

Editors should run every text submitted by an author through an AI detector (VerifyMyWriting is one of the best). Yes, I’m aware that such detectors can both miss things and generate false positives. But they’re one of the easiest ways to flag when it’s a good idea to be suspicious.

If authors know that text is going to be run through an AI detector, they’re far less likely to try to get away with submitting AI-generated material.

A publisher should reject a manuscript or request changes in a manuscript if:

- The author volunteers that they created large amounts of text with AI.

- The AI detector flags large amounts of text as AI-generated, and the author cannot satisfactorily explain how they generated that text.

- The editor suspects, based on reading the text, that it is AI-generated, and the author cannot satisfactorily explain how they generated that text.
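For publishers who want to build these three conditions into a submissions workflow, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Submission` fields, the function name, and especially the 25% threshold standing in for “large amounts of text,” which the rule itself leaves to the editor’s judgment. It does not call any real AI detector; it only encodes the decision once the detector and the editor have weighed in.

```python
from dataclasses import dataclass

# Hypothetical cutoff for "large amounts of text"; the post does not define one.
LARGE_AMOUNT_THRESHOLD = 0.25


@dataclass
class Submission:
    # Author volunteered that large amounts of text were created with AI.
    author_disclosed_large_ai_text: bool
    # Share of the manuscript the detector flagged, from 0.0 to 1.0.
    detector_flagged_fraction: float
    # Editor suspects AI generation based on reading the text.
    editor_suspects_ai: bool
    # Author satisfactorily explained how the questioned text was produced.
    author_explanation_satisfactory: bool


def should_reject_or_request_changes(
    s: Submission, threshold: float = LARGE_AMOUNT_THRESHOLD
) -> bool:
    """Return True if any of the three conditions in the list applies."""
    # Condition 1: voluntary disclosure is enough on its own.
    if s.author_disclosed_large_ai_text:
        return True
    # Conditions 2 and 3: a detector flag or an editor's suspicion only
    # counts when the author cannot satisfactorily explain the text.
    detector_flag = s.detector_flagged_fraction >= threshold
    suspicion = detector_flag or s.editor_suspects_ai
    return suspicion and not s.author_explanation_satisfactory


if __name__ == "__main__":
    flagged_but_explained = Submission(False, 0.40, False, True)
    print(should_reject_or_request_changes(flagged_but_explained))
```

Note that a detector flag alone never triggers rejection in this sketch. The author always gets the chance to explain, which is the safeguard against false positives.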

This process isn’t foolproof. But it would likely screen out most of the authors who were too lazy to write their own books (or who hired ghostwriters who were similarly lazy).

I find AI a useful tool and I plan to use your template for my own checklist. It’s a great idea. However, I would balk at generating graphics, particularly final images. Because any image is built on a library of content created by real artists, I feel it’s a kind of plagiarism. I’d rather reach out to an actual artist. But I wouldn’t be opposed to giving them a mock-up generated by AI as a way to communicate my ideas.

How, in this day and age, does a publisher get caught out like this? I see Sally Walker/Raynor Winn appears to have written a book before her first: again, how are these things not being checked?

If an author cannot come up with their own titles, write proper citations, write their own outline and/or first draft, or work with a team of people for editing and consistency, perhaps they are in the wrong profession, imo. I would not want to read something an author passed off as their own when it was AI generated; it’s not worth the paper it’s printed on.