The New York Times says Accenture gets $500m for Facebook moderation. Why is this news?

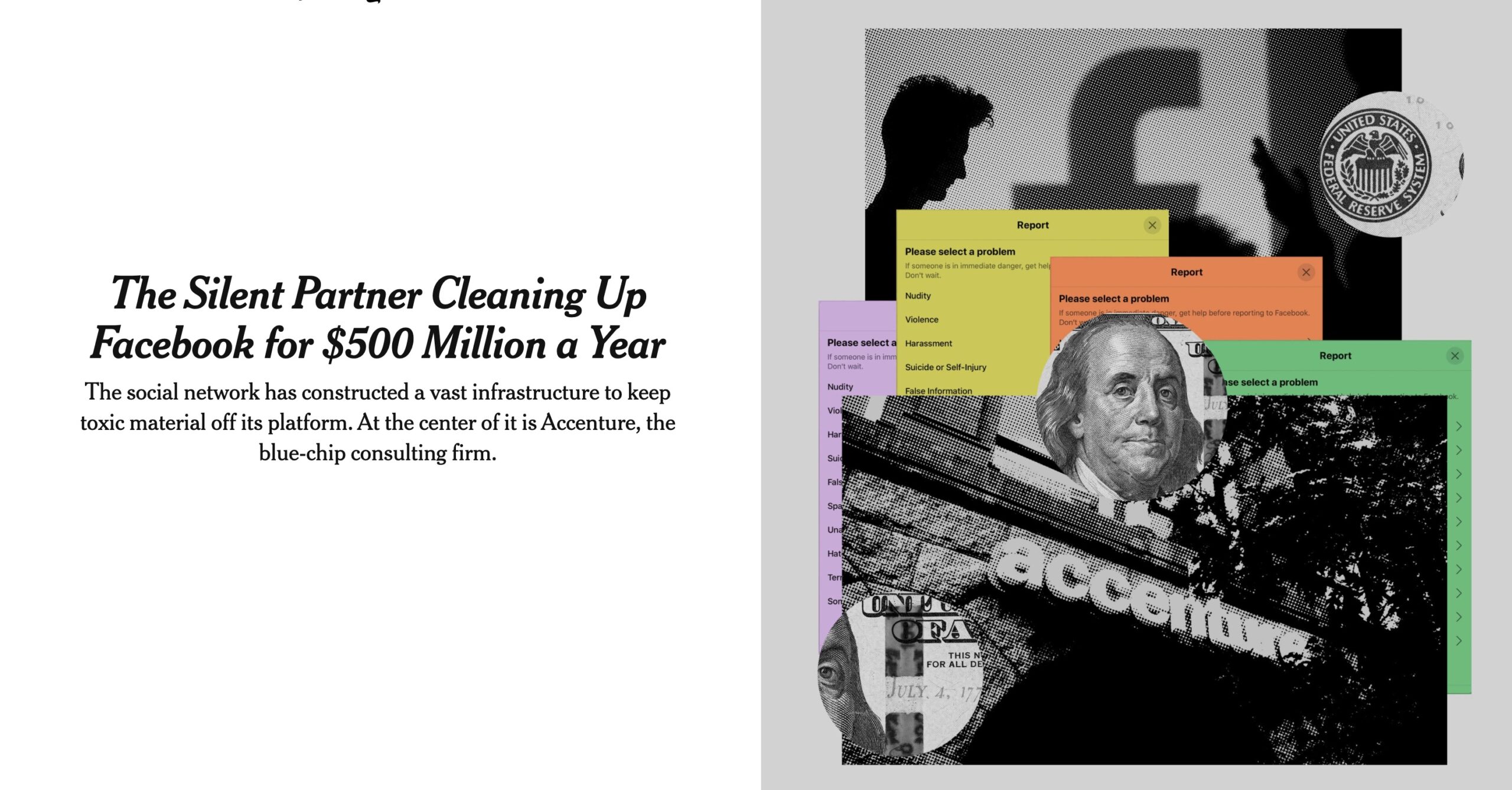

Yesterday, the New York Times published a piece called “The Silent Partner Cleaning Up Facebook for $500 Million a Year.” Was it actually news, and should it change anything?

What’s the news here?

Here’s what’s in the article, most of which is far from shocking. Much of it repeats the revelations in an excellent exposé by Casey Newton for The Verge in 2019.

- People try to post horrifying things on Facebook (“violent and hateful content”). We knew this. The article includes shocking examples of child rape and animal torture, but we already knew from the piece in The Verge that horrible people were posting this kind of stuff.

- Mark Zuckerberg has attempted to use AI to do content moderation, but it is insufficient. The Verge wrote about this, too.

- Facebook outsources content moderation. Old news.

- Accenture, the massive consulting firm, is the largest contractor providing moderation. I hadn’t heard this. But I’m not sure why I should care. Are we going to boycott Accenture for doing something that needs to be done, or for mistreating the employees who do the work?

- Accenture’s contract is worth $500 million per year. I did not realize the job was this big. But Facebook’s net income for 2020 was $29 billion. Its total costs and expenses were $53 billion. So the Accenture contract is about 1% of expenses, and it reduces profit by roughly 2%. It’s a big number, but not that surprising in the context of Facebook’s business. (The article describes the contract as “a small fraction of Accenture’s annual revenue,” and that revenue is likely in the tens of billions.)

- Accenture uses its Facebook contract to gain currency in Silicon Valley. Mildly interesting, but only to people interested in Silicon Valley politics.

- Facebook keeps changing its goals, targets, and policies. Not surprising to me. How about you?

- The start of the moderation issues was Facebook’s nudity ban in 2007. Makes sense.

- Accenture has almost 6,000 moderators working everywhere from Manila to Texas. Also makes sense.

- Moderators start at $18 per hour, but Accenture bills them at $50 per hour. That’s what consulting firms do. It also reflects the value to Facebook of making this somebody else’s problem.

- Accenture’s staff suffer mental health issues due to the horrifying nature of what they need to moderate. This is true, and it is very sad. It was also the central point of the Verge exposé.

- Accenture’s management is conflicted about the contract. But they didn’t do anything. So why does this matter?

- Facebook settled a suit with moderators for $52 million, even though they worked for Accenture. The Verge reported this in May of last year.
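The back-of-the-envelope figures in the list above (the contract as a share of Facebook’s expenses and profit, and the markup on moderator pay) can be checked with a few lines of Python. This is only a rough sketch: the dollar amounts are the ones quoted in this post, the variable names are made up for illustration, and dividing the contract by net income ignores taxes, so treat the profit figure as an approximation.

```python
# Figures quoted in the post, in US dollars.
CONTRACT_PER_YEAR = 500e6            # Accenture's Facebook contract
FB_NET_INCOME_2020 = 29e9            # Facebook net income, 2020
FB_COSTS_AND_EXPENSES_2020 = 53e9    # Facebook total costs and expenses, 2020
MODERATOR_PAY_PER_HOUR = 18          # starting pay for a moderator
BILLED_RATE_PER_HOUR = 50            # what Accenture bills per moderator hour

# Contract as a fraction of Facebook's total costs and expenses.
expense_share = CONTRACT_PER_YEAR / FB_COSTS_AND_EXPENSES_2020

# Contract as a fraction of net income.
# Assumption: a simplification that ignores tax effects.
profit_share = CONTRACT_PER_YEAR / FB_NET_INCOME_2020

# How many times the starting wage Accenture bills per hour.
billing_multiple = BILLED_RATE_PER_HOUR / MODERATOR_PAY_PER_HOUR

print(f"Share of expenses: {expense_share:.1%}")
print(f"Share of profit:   {profit_share:.1%}")
print(f"Billing multiple:  {billing_multiple:.1f}x")
```

The results land just under 1% of expenses and a bit under 2% of profit, which is where the rounded numbers in the list come from.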

Toting this all up: except for the $500 million Accenture contract, it’s primarily warmed-over stuff we’ve heard before, mostly from The Verge’s excellent reporting. I imagine Casey Newton is wondering why the Times is writing about what he already reported.

What should be different?

What should we do with this information? Should anything be different because of what we read?

If you assume Facebook has a right to exist:

- Should it do content moderation? Clearly, there’s a need, because people post horrifying things.

- Should it outsource the moderation? I don’t see why this is a problem. It’s terrible work, but why does it matter whether the people doing it are Facebook employees or employees of a contractor?

- Is $500 million a shocking amount to pay for this? No.

- Should Accenture do this work? Frankly, I don’t care. I don’t hold it against Accenture that they took this contract.

- Should Accenture provide better guidance and counseling for moderators and pay them more? Yes.

The problem here is that Facebook’s very existence magnifies the reach of lies, disinformation, and the worst of human depravity. That’s a problem with Facebook, and with social media in general.

Outsourcing moderation doesn’t make that problem go away. That’s not Accenture’s fault. The problem here is rooted in the interaction between evil and technology, which is a way bigger issue than Accenture’s $500 million payday.

I do not see a need to moderate content. But I could see how pure spam clogs the wheels of the money machine.

Why do you think there is no successful social network without content moderation?

What would happen to Facebook if it allowed everything to spread willy-nilly, including child pornography, Russian disinformation, fake news about horse dewormers, and libel regarding political candidates?

Facebook exists to sell users to advertisers. Anything else the company does is incidental. Thanks to the nature of the platform, the company has volumes of data on each user that should allow pinpoint targeting of products and services to each user’s interests and activities. But the advertisements are so seldom relevant, much less compelling, that I assume Facebook only promises impressions—not conversions. To deliver such poor execution when so much more is possible would suggest two unflattering things: that Facebook is selling worthless leads, and that advertisers still willingly buy them. As long as those things remain true, content and its moderation will receive only the attention needed to avoid criminal charges that might open the door to regulation. Whether it’s a Facebook employee, an Accenture contractor, or some AI based on machine learning, moderation is only concerned with liability for the company—not truth, ethics, or morals. The Facebook business model has declared those concepts obsolete.